DeepSeek sparse attention is being tested in public: the company has released an experimental sparse‑attention model aimed at dramatically lowering the cost of long‑context interactions—and it is shipping that architecture inside a consumer chatbot. By long‑context, we mean multi‑document chats and multi‑turn sessions that keep tens of thousands of tokens in play. If the model’s cost savings hold amid messy, real‑world usage, the reference price for “memory‑heavy” features could fall across consumer apps and developer APIs, with knock‑on effects for pricing and product design (see TechCrunch’s model report and companion coverage of the app rollout).

Architecture and Training: How Sparse Attention Lowers Long‑Context Costs

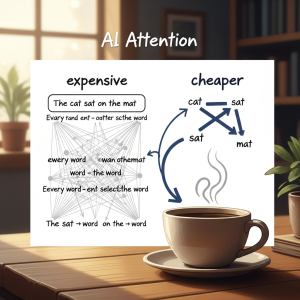

Transformer attention scales roughly with the square of sequence length, so doubling the context roughly quadruples the attention work. Sparse attention restructures that computation by attending densely to a smaller, strategically chosen set of tokens (often a blend of local windows for recency and periodic global anchors) while handling the remainder more cheaply. DeepSeek frames its approach as tuned for long‑context workloads, explicitly targeting inference economics without collapsing coherence across sprawling inputs (TechCrunch’s cost claim).
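To make the scaling argument concrete, here is a minimal sketch of one generic sparse pattern: a causal local window plus periodic global anchors. It is an illustration only. The function names and the `window` and `stride` parameters are assumptions for this example, and nothing here is drawn from DeepSeek's actual routing, which the public reporting does not describe.

```python
def sparse_mask(n, window, stride):
    """Causal mask where token i may attend to token j (j <= i) if j is
    inside the local window or sits on a global anchor (j % stride == 0).
    Illustrative pattern only, not any vendor's real design."""
    return [
        [(j <= i) and (i - j < window or j % stride == 0) for j in range(n)]
        for i in range(n)
    ]


def count_pairs(mask):
    """Number of token-to-token interactions the mask allows."""
    return sum(sum(row) for row in mask)


def dense_pairs(n):
    """Interactions under full causal attention: n * (n + 1) / 2."""
    return n * (n + 1) // 2


if __name__ == "__main__":
    # Hypothetical window and stride chosen only for illustration.
    for n in (1024, 2048, 4096):
        sparse = count_pairs(sparse_mask(n, window=128, stride=64))
        print(n, dense_pairs(n), sparse)
```

With a fixed window and stride, the sparse count grows far more slowly than the dense count as `n` doubles, which is the economic point the paragraph above makes. A learned or content-dependent selection scheme would replace the fixed rule used here.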

The key challenge is routing: deciding which tokens to elevate when visibility is pruned. Training matters here. Models that experience sparsity during training can learn to place salient information where attention remains dense and to use globally tagged positions that persist across layers. Public reporting focuses more on the inference cost goal than on pretraining specifics, but the stability of long‑context behavior will ultimately reflect whether sparsity was baked into the training regime rather than flipped on at serve time (TechCrunch on the consumer deployment).

Under the hood, memory locality matters as much as math. Long dialogs and document threads stress key‑value (KV) caches and high‑bandwidth memory; poorly tuned models see tail latency spike as caches grow. A well‑structured sparse pattern slows cache growth, keeps hot tokens resident, and reduces p99 hiccups. That operational difference is what separates a clever benchmark from a usable product. For a deeper primer on these mechanics and trade‑offs, see our explainer on sparse attention and long‑context costs.
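A rough back-of-the-envelope sketch shows why KV caches dominate long sessions. The formula is the standard one for a dense cache (keys plus values, per layer, per KV head). The model dimensions below are hypothetical placeholders, not DeepSeek's published configuration.

```python
def kv_cache_bytes(tokens, layers, kv_heads, head_dim, bytes_per_value=2):
    """Bytes needed to cache keys and values for one session.
    The leading factor of 2 accounts for storing both K and V."""
    return 2 * layers * kv_heads * head_dim * bytes_per_value * tokens


# Hypothetical model shape, assumed purely for illustration (fp16 values).
LAYERS, KV_HEADS, HEAD_DIM = 32, 8, 128

if __name__ == "__main__":
    for tokens in (4096, 32768, 131072):
        gib = kv_cache_bytes(tokens, LAYERS, KV_HEADS, HEAD_DIM) / 1024**3
        print(f"{tokens:>7} tokens -> {gib:.2f} GiB per session")
```

Under these assumed dimensions, a 32,768-token session needs 4 GiB of cache on its own. Any scheme that reduces how many cached tokens must be read or kept resident per step shrinks the memory traffic behind tail-latency spikes.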

What’s New in DeepSeek’s Sparse Attention Deployment

Two aspects stand out. First, the architectural claim is tied directly to a consumer app that invites messy input—multifile uploads, cross‑document requests, follow‑ups, and retrieval hops—rather than only curated benchmarks. Second, DeepSeek is treating inference efficiency as a first‑class product feature, not just an internal metric. That posture compresses the feedback loop between routing choices and user‑perceived quality, allowing the team to tune sparsity patterns based on how people actually use context (TechCrunch’s paired coverage).

Scaling and Compute: The Inference Costs That Matter

Training headlines grab attention, but the day‑to‑day economics live in the serving stack. The most expensive sessions are the long ones: KV caches stay hot, dense attention cost grows quadratically with context, and memory movement dominates. DeepSeek’s claim targets exactly that tier: for extended contexts, its sparse architecture can cut API costs roughly in half versus dense attention baselines (TechCrunch’s model report).

Consider a typical workflow: a user uploads two reports, a slide deck, and a transcript, then asks for cross‑document synthesis and follow‑on analysis. Dense attention pays for many needless token‑to‑token interactions. A sparse path prunes those interactions while maintaining anchors for discourse and recency. If done well, the result is fewer floating‑point operations, steadier memory footprints, and more predictable latency at p95 and p99. That predictability is what enables generous long‑context allowances in consumer plans without blowing up margins. For broader implications and SKU design, see our coverage of DeepSeek’s cost‑first bet.

Real‑World Evaluation: From Needle‑in‑a‑Haystack to Everyday Chat

Synthetic tests can probe recall—planting a sentence in a long document and checking whether the model retrieves it—but live usage is messier: mixed formats, follow‑ups that reframe the task, and retrieval hops that redefine what “context” even means. Shipping the architecture inside a mainstream chatbot creates a production‑scale evaluation protocol: do answers stay grounded as sessions stretch, does the model resist repetition, and how often do users need to retry to get a coherent outcome (TechCrunch on the app)?
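The synthetic recall probe described above can be sketched in a few lines. This is a minimal harness under stated assumptions: `ask_model` is a hypothetical callable that takes a prompt string and returns the model's answer as a string, and the filler sentence, needle, and depth values are arbitrary choices for illustration.

```python
def build_haystack(filler, needle, n_sentences, depth):
    """Insert `needle` at a fractional depth (0.0 = start, 1.0 = end)
    among `n_sentences` copies of a filler sentence."""
    sentences = [filler] * n_sentences
    sentences.insert(round(depth * n_sentences), needle)
    return " ".join(sentences)


def run_needle_test(ask_model, needle, question, expected, depths,
                    n_sentences=200):
    """Return {depth: True/False} for whether the expected string shows up
    in the answer. `ask_model` is a hypothetical prompt -> text callable."""
    results = {}
    for depth in depths:
        document = build_haystack("The sky was grey that day.", needle,
                                  n_sentences, depth)
        answer = ask_model(document + "\n\n" + question)
        results[depth] = expected.lower() in answer.lower()
    return results
```

Sweeping `depths` and `n_sentences` gives a recall grid by position and length. It measures only verbatim retrieval, so it complements rather than replaces the live-usage signals discussed above, such as grounding, repetition, and retry rates.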

Sparse attention has recognizable failure modes. Important references can fall outside dense bands and be replaced by confident but invented connective tissue. Layout‑heavy PDFs and code diffs can scramble token salience; multilingual switches can break heuristics that favor local recency. The practical question is error cost: do mistakes surface in places where they can be reviewed (summaries, synthesis) or in high‑stakes reasoning where subtle misreads are unacceptable?

For buyers evaluating long‑context options, three pragmatic checks keep assessments grounded:

- Stability across extended, multi‑document sessions without a spike in retries

- Predictable tail latency as context grows toward your typical upper bounds

- Pricing and quotas that make persistent memory and larger uploads viable for daily use
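The tail-latency check can be run against your own request logs. The sketch below assumes a hypothetical log format of `(context_tokens, latency_ms)` pairs and uses a simple nearest-rank percentile. The bucket size is an arbitrary choice and should be adjusted to match your typical context lengths.

```python
def percentile(samples, p):
    """Nearest-rank percentile for an integer p in 1..100."""
    ordered = sorted(samples)
    rank = (p * len(ordered) + 99) // 100  # integer ceil(p * n / 100)
    return ordered[max(rank, 1) - 1]


def tail_latency_by_bucket(samples, bucket_size=8000):
    """Group (context_tokens, latency_ms) pairs into context-length buckets
    and report p95 and p99 latency for each bucket."""
    buckets = {}
    for tokens, latency in samples:
        start = tokens // bucket_size * bucket_size
        buckets.setdefault(start, []).append(latency)
    return {
        start: {"p95": percentile(vals, 95), "p99": percentile(vals, 99)}
        for start, vals in sorted(buckets.items())
    }
```

If p99 climbs much faster than p95 as the buckets move toward longer contexts, that is the kind of tail instability the second check is meant to surface.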

Safety and Governance: Alignment in Extended Sessions

Cheaper long‑context chat widens usage: more uploads, more persistent memory, and longer back‑and‑forth. That raises governance questions. Alignment strategies and content filters are usually validated on short prompts; over extended sessions, subtle miscalibration can accumulate. TechCrunch frames DeepSeek’s release as an experiment that prioritizes inference efficiency inside a consumer interface, implying the company can tune access tiers and moderation as behavior is observed (app coverage).

Enterprises will ask where telemetry is stored, how long it is retained, and whether persistent memory can be scoped per workspace or per user. They will probe how moderation handles long‑running contexts, whether on‑device processing is used, and how opt‑out controls work for training. Jurisdiction and retention policies will determine whether cost advantages translate into enterprise procurement, especially in regulated sectors.

Competitive Dynamics: Pricing Pressure and Feature Unlocks

If the “half the cost” headline holds in production for extended contexts, incumbents will be pressed to reprice long windows and to introduce their own sparse or hybrid attention paths. Expect the battleground to shift from raw context length to “smart context”: a combination of routing, cache management, and retrieval‑aware kernels that preserve quality while keeping spend bounded. That, in turn, enables features that have felt like luxuries—document chat with larger uploads, persistent sessions that remember preferences, and meeting recall that spans weeks—to become defaults rather than upsells (TechCrunch’s model report).

For developers, the upside is straightforward: more ambitious prompts with less worry about metered usage. Vendors will still need guardrails so long‑running sessions don’t drift. Expect productized context controls (pinning facts, freezing excerpts, or exposing “grounded spans”) to help users steer the model as contexts grow.

Short‑Term Forecast: What Changes Next

In the near term, expect DeepSeek to tune routing patterns using telemetry from consumer traffic: which context lengths dominate, where answers wobble, and how often retrieval is needed to compensate for sparsity. That iteration should produce a second‑wave release that blends dense and sparse paths more intelligently, especially for layout‑heavy documents and multilingual threads.

As developer adoption crosses an early threshold, rivals will likely answer in two ways. Model vendors will expose hybrid attention modes or specialized long‑context variants, pitching steadier tail latency and friendlier pricing on lengthy inputs. Cloud platforms will pilot dedicated long‑context endpoints that co‑locate retrieval, cache management, and sparsity‑friendly kernels so document‑scale prompts are easier to budget and operate.

By late next year, if DeepSeek sustains a measurable cost advantage without a visible quality gap on real workloads, the reference price for long‑context APIs will shift downward across baseline plans. Consumer apps will normalize larger uploads and longer sessions, and enterprise buyers will start insisting that long‑context allowances be included by default. If trade‑offs remain material—missed references or drift in extended chats—the market will bifurcate: sparse‑optimized endpoints for summarization and synthesis where results can be reviewed, with dense attention reserved for high‑stakes reasoning and compliance‑sensitive tasks.

Buyer takeaway: choose sparse‑optimized paths when outputs are reviewable and the workload is document‑heavy or summarization‑first; stick with dense attention for safety‑critical reasoning or when subtle dependencies must never be pruned.