Sparse attention just got a real-world test. DeepSeek has released an experimental model designed for long-context operations, claims it can cut API inference costs roughly in half, and is simultaneously rolling out a consumer chatbot to validate those economics under live traffic (TechCrunch covered the model and its costs in one report and the product in a companion piece). If the savings hold for real users, long-context features could move from luxury to default, and pricing norms will have to adjust.

Why DeepSeek’s cost‑first bet matters now

Longer context length has become a marquee capability, but one that often carries steep, nonlinear costs as sequences grow. By tying a cost-focused architecture to an end-user product, DeepSeek isn’t just publishing a benchmark; it’s challenging rivals to match price/performance where models are most expensive to serve—extended contexts. The company’s posture is explicit: prove the claim in public, with consumer-scale workloads, and force a conversation about how platforms price long-context endpoints.

The strategic implication is straightforward. If inference cost per token at long contexts falls meaningfully without a quality cliff, product teams will ship features that were previously priced out—multi-document analysis, codebases in-context, and longer-running agents. Providers that can’t trim the attention bill will struggle to keep premium pricing on long windows.

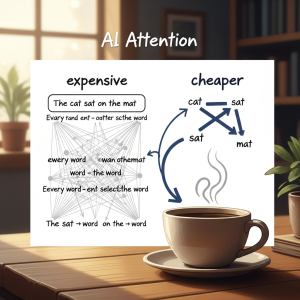

Architecture: how sparse attention reshapes the cost curve

Transformer attention naively scales with the square of sequence length: doubling the context roughly quadruples the attention computation, and the attention-score memory as well if the full matrix is materialized. Sparse-attention variants prune that matrix, attending densely to a small set of global or recent tokens and more sparsely elsewhere. DeepSeek’s mechanism is tuned for long-context operations, seeking sizable inference savings while preserving salient dependencies (TechCrunch report). In plain terms: focus compute on the few tokens that matter most and skip the rest.
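To put rough numbers on the quadratic-versus-sparse argument, the sketch below counts how many query-key pairs a causal model scores under dense attention and under a generic sliding-window-plus-global-tokens pattern. The pattern, the window size, and the number of global tokens are illustrative assumptions, not DeepSeek’s published mechanism, and pair counts ignore kernel efficiency and memory traffic.

```python
def dense_causal_pairs(n: int) -> int:
    """Query-key pairs scored by dense causal attention over n tokens."""
    # Query i attends to keys 0..i, i.e. i + 1 keys.
    return n * (n + 1) // 2


def sparse_causal_pairs(n: int, window: int, n_global: int) -> int:
    """Pairs scored by a hypothetical causal window-plus-global pattern.

    Every query sees the previous `window` tokens (including itself) plus
    the first `n_global` tokens of the sequence.
    """
    total = 0
    for i in range(n):
        local_start = max(0, i - window + 1)
        local = i - local_start + 1  # keys in [local_start, i]
        # Global keys before the window; all of them are <= i, so visible.
        global_extra = min(n_global, local_start)
        total += local + global_extra
    return total


if __name__ == "__main__":
    for n in (8_192, 32_768, 131_072):
        d = dense_causal_pairs(n)
        s = sparse_causal_pairs(n, window=512, n_global=64)
        print(f"n={n:>7}: dense={d:,} sparse={s:,} kept={s / d:.1%}")
```

Once the sequence is much longer than the window, each query touches at most `window + n_global` keys, so doubling the context roughly doubles the sparse count while the dense count roughly quadruples.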

There are many recipes under the “sparse” umbrella: block-local windows, periodic global tokens, learned routing, and hybrids that mix dense and structured sparsity. What matters for cost is how much compute and memory the model can skip without harming generalization on the tasks users care about. The consumer app is the proving ground: real query mixes, document uploads, and session lengths will reveal whether answers stay coherent as contexts stretch (TechCrunch’s product coverage).
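“Learned routing” covers several mechanisms; one of the simplest to illustrate is top-k attention, where a query scores candidate keys but only the k highest-scoring ones enter the softmax. The single-query sketch below is a generic instance in pure Python, not DeepSeek’s design; `k` and the toy vectors are assumptions. For top-k sparsity to save compute in practice, the selection step itself must be cheap; this sketch scores every key with a full dot product purely for clarity.

```python
import math
from typing import List, Sequence


def dot(a: Sequence[float], b: Sequence[float]) -> float:
    return sum(x * y for x, y in zip(a, b))


def topk_attention(
    query: Sequence[float],
    keys: List[Sequence[float]],
    values: List[Sequence[float]],
    k: int,
) -> List[float]:
    """Single-query attention restricted to the k highest-scoring keys."""
    if k < 1:
        raise ValueError("k must be at least 1")
    d = len(query)
    scores = [dot(query, key) / math.sqrt(d) for key in keys]
    # Keep the indices of the k largest scores; every other key is skipped.
    kept = sorted(range(len(keys)), key=lambda j: scores[j], reverse=True)[:k]
    # Numerically stable softmax over the kept scores only.
    m = max(scores[j] for j in kept)
    weights = {j: math.exp(scores[j] - m) for j in kept}
    z = sum(weights.values())
    out = [0.0] * len(values[0])
    for j, w in weights.items():
        for t, v in enumerate(values[j]):
            out[t] += (w / z) * v
    return out
```

Setting `k` to the number of keys recovers ordinary dense softmax attention, which makes a convenient correctness check when experimenting with smaller values.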

Under the hood, memory behavior matters as much as arithmetic. Long contexts stress high-bandwidth memory, above all through the KV cache, which grows with every token held in context. If the model’s sparse pattern slows cache growth and improves locality, p99 latency and energy per token can stabilize even as sessions lengthen. That’s the difference between a lab curiosity and a production-ready long-context endpoint.
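The memory side can be estimated with standard KV-cache arithmetic: each layer stores one key and one value vector per KV head for every cached token. The sketch below uses a hypothetical configuration (32 layers, 8 KV heads, head dimension 128, 16-bit values), not DeepSeek’s architecture, and assumes a fixed window-plus-global pattern whose out-of-window tokens are never attended again and can therefore be evicted.

```python
def kv_cache_bytes(tokens: int, layers: int = 32, kv_heads: int = 8,
                   head_dim: int = 128, bytes_per_elem: int = 2) -> int:
    """Bytes of KV cache for one sequence: K and V for every layer and KV head."""
    return 2 * layers * kv_heads * head_dim * bytes_per_elem * tokens


def windowed_cache_tokens(context: int, window: int, n_global: int) -> int:
    """Tokens that must stay cached under a fixed window-plus-global pattern.

    Assumes tokens outside the window (and not global) are never attended
    again. Content-routed patterns such as top-k selection generally cannot
    make this assumption and must keep, or offload, the full cache.
    """
    return min(context, window + n_global)


GIB = 1024 ** 3

if __name__ == "__main__":
    for ctx in (8_192, 32_768, 131_072):
        dense = kv_cache_bytes(ctx)
        sparse = kv_cache_bytes(windowed_cache_tokens(ctx, window=512, n_global=64))
        print(f"{ctx:>7} tokens: dense {dense / GIB:.2f} GiB, windowed {sparse / GIB:.3f} GiB")
```

Under these assumptions each token costs 128 KiB of cache, so a full 128K-token context needs 16 GiB per sequence, while the windowed pattern stays flat regardless of session length.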

Training signals and data that make sparsity stick

TechCrunch’s initial coverage focuses on inference costs and productization rather than pretraining recipes, but the training side will shape how well sparse patterns transfer. If sparsity is present during training—not just toggled at serve time—models can learn to route information into the positions that remain visible, a precondition for stable long-context behavior. Absent those signals, models often look sharp on short prompts but unravel as context length grows. As more technical detail emerges, the key question is whether the data mix and objective nudged the model toward robust routing under sparse visibility.

Compute economics: inference margins drive adoption

The headline is an inference story. Training costs make news, but day-to-day margins live in the serve stack. DeepSeek is explicitly targeting the most expensive tier of inference and reframing it as a tractable SKU: long-context sessions that keep KV caches hot and whose attention compute grows quadratically with length under full attention. If the architecture can reduce floating-point operations through structured sparsity while keeping memory footprints in check, providers can offer longer windows without punitive pricing tiers.

Consider a common workload: a user uploads several research PDFs, pastes in notes, and asks for cross-document synthesis. With dense attention, the compute and memory bill climbs quickly and latency gets spiky. With sparse attention, the model can prioritize recently introduced and globally tagged tokens, reduce unnecessary interactions, and keep caches resident. That makes costs more predictable and lets platforms experiment with consumer-friendly pricing for extended sessions.
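The multi-document scenario can be sketched as a visibility rule: the first few tokens of each uploaded document are tagged global (standing in, hypothetically, for a title or header), and every query also sees a local window of recent tokens. This is an illustrative construction, not DeepSeek’s published pattern; the anchor count, window size, and document lengths are assumptions.

```python
from typing import List, Set


def global_positions(doc_lengths: List[int], anchors_per_doc: int) -> Set[int]:
    """Positions of the first `anchors_per_doc` tokens of each concatenated document."""
    positions: Set[int] = set()
    start = 0
    for length in doc_lengths:
        positions.update(range(start, start + min(anchors_per_doc, length)))
        start += length
    return positions


def visible_keys(i: int, window: int, globals_: Set[int]) -> List[int]:
    """Keys a causal query at position i may attend to under window + global tags."""
    local = set(range(max(0, i - window + 1), i + 1))
    tagged = {g for g in globals_ if g <= i}
    return sorted(local | tagged)


if __name__ == "__main__":
    docs = [1_000, 2_500, 800]      # hypothetical token counts for three uploads
    g = global_positions(docs, anchors_per_doc=16)
    last = sum(docs) - 1            # a query at the end of the prompt
    keys = visible_keys(last, window=256, globals_=g)
    print(len(keys), "of", last + 1, "keys visible")
```

A late query can still reach an anchor in every document, while most of each document’s body is visible only through the local window; whether that preserves enough cross-document signal is what real sessions will test.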

Live rollout as evaluation protocol

The app functions as a live evaluation protocol. Synthetic benchmarks can probe context recall and needle-in-a-haystack behavior, but they rarely capture the messiness of user sessions: mixed media, retrieval hops, and tool use. DeepSeek’s move brings measurement into production: are conversations coherent across long back-and-forths, does the model retain salient details without repeating itself, and how does quality degrade when users paste in large documents? Those are the questions that determine whether a “half the cost” claim translates to a better user experience.
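Synthetic probes are still useful as a baseline. A needle-in-a-haystack test plants a fact at a chosen depth in filler text and checks whether the model’s answer recovers it. The sketch below builds such a prompt and applies a deliberately simple containment check; the filler sentence, needle wording, and grader are hypothetical, and the model call itself is left out.

```python
def build_needle_prompt(needle: str, filler_sentence: str, n_sentences: int,
                        depth: float) -> str:
    """Insert `needle` after round(depth * n_sentences) filler sentences."""
    if not 0.0 <= depth <= 1.0:
        raise ValueError("depth must be in [0, 1]")
    sentences = [filler_sentence] * n_sentences
    sentences.insert(round(depth * n_sentences), needle)
    return " ".join(sentences)


def score_answer(answer: str, expected: str) -> bool:
    """Case-insensitive containment check; a deliberately simple grader."""
    return expected.lower() in answer.lower()
```

Sweeping `depth` from 0.0 to 1.0 at several context lengths yields the familiar recall grid, but, as the paragraph above notes, a clean grid says little about messy multi-turn sessions.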

That real-world feedback loop also hardens the cost story. It’s one thing to publish per-token savings in a lab; it’s another to keep spend down when users push the model off happy paths. The app will expose long-context edge cases—layout-heavy PDFs, code diffs, multilingual notes—that can break brittle sparsity patterns. If the model remains calibrated under this domain shift, the architecture choice will have passed a serious market test.

Positioning and capital: who DeepSeek is competing with

DeepSeek’s posture is unusual for a model lab. Rather than acting as an API wholesaler, it is pushing a combined model-and-app strategy and leaning into a price play at the high-cost end of the market—the long-context tier. That positioning invites a different set of competitors: not only foundation-model incumbents but also mobile-native chatbots and productivity apps that monetize daily usage. The investor story, including backing from China-based investors and a quantitative fund, signals an appetite for rapid commercialization through a consumer interface rather than a slower enterprise-first path, as noted in TechCrunch’s reporting.

For incumbents, the threat vector is clear. If users can get extended context at consumer-friendly prices, enterprise buyers will press their vendors for similar pricing on long-context endpoints. That may push incumbents to surface their own sparse or hybrid attention variants sooner, or to route long windows to specialized models optimized for memory locality and structured sparsity.

Evaluation and failure modes to watch

Sparse attention can fail quietly. Models may overfit to the visible bands of tokens and miss cross-document links, or hallucinate connective tissue when a dependency falls outside the sparse pattern. Long-context recall often decays as chains of reasoning span beyond dense windows. A concrete failure looks like this: a model accurately summarizes two reports but invents a causal link because the key sentence sat outside its dense window. For a credible “half the cost” narrative, we should look for independent reproduction on long-context suites and, more importantly, production metrics: how often do users retry, how many tokens are burned to re-ground the model, and how stable are answers as sessions stretch?
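The production metrics named above can be tracked per context-length bucket, which shows whether quality slips as sessions grow. The sketch below assumes a hypothetical per-turn log schema (`context_tokens`, `prompt_tokens`, `is_retry`, `is_reground`); how a platform would actually detect retries or re-grounding is an open design choice, not something the source describes.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass
class Turn:
    """One user turn from a hypothetical session log."""
    context_tokens: int   # tokens in the context window at this turn
    prompt_tokens: int    # tokens the user sent this turn
    is_retry: bool        # user regenerated or re-asked the same question
    is_reground: bool     # user re-pasted material the model should already have


def bucket(context_tokens: int, edges: List[int]) -> str:
    """Label a context length by the first ascending edge it does not exceed."""
    for edge in edges:
        if context_tokens <= edge:
            return f"<= {edge}"
    return f"> {edges[-1]}"


def session_metrics(turns: List[Turn], edges: List[int]) -> Dict[str, Dict[str, float]]:
    """Retry rate and share of prompt tokens spent re-grounding, per context bucket."""
    stats: Dict[str, Dict[str, int]] = {}
    for t in turns:
        s = stats.setdefault(bucket(t.context_tokens, edges),
                             {"turns": 0, "retries": 0, "tokens": 0, "reground_tokens": 0})
        s["turns"] += 1
        s["retries"] += int(t.is_retry)
        s["tokens"] += t.prompt_tokens
        s["reground_tokens"] += t.prompt_tokens if t.is_reground else 0
    return {
        b: {
            "retry_rate": s["retries"] / s["turns"],
            "reground_share": s["reground_tokens"] / s["tokens"] if s["tokens"] else 0.0,
        }
        for b, s in stats.items()
    }
```

A rising retry rate or re-grounding share in the longest buckets would be an early sign that savings on paper are being spent back in user effort.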

Because sparse patterns depend heavily on implementation details, integration matters. Framework support for KV cache compression, attention-aware operator fusion, and scheduler hints that keep the active tokens’ KV entries in fast memory is still uneven across stacks. A leaky runtime can dilute the gains of the best architectural choice and amplify the latency tails of a weaker one. Expect the most robust wins where models, runtimes, and deployment topology are tuned together.

Safety, governance, and access tiers

A lower-cost long-context chatbot will see broader usage, including edge cases and sensitive topics. The governance question is whether the model’s alignment strategy and content filters hold up under extended sessions, where subtle miscalibration can accumulate. TechCrunch frames DeepSeek’s current release as an experiment in cost-efficient attention tied to a consumer interface, not a fully open model drop. That implies the company can adjust access tiers and moderation as it observes behavior.

Enterprise buyers will also scrutinize data handling and jurisdiction. China-based backing will prompt questions about telemetry, retention, and the boundaries between model improvement and user privacy. Clarity on logging defaults, on-device processing, and opt-out controls will influence whether cost advantages can translate into enterprise adoption—especially in regulated sectors.

Competitive dynamics: if cost falls, features expand

If sparse attention reliably lowers spend for long-context use, the feature map shifts. Users will expect persistent memory, multi-document synthesis, and code-aware chat without metered anxiety. App developers will raise context by default, leaning on retrieval and tools to keep token usage efficient. In parallel, platform providers are likely to introduce specialized long-context endpoints that route to sparse-optimized models, with pricing that reflects more predictable memory and compute profiles. That creates a new competitive fulcrum: cost-efficient attention becomes a product feature, not just a research trick.

This is more than a model story; it’s a SKU story. Expect price experiments—bundled long-context sessions, off-peak discounts, and developer credits tied to context budgets—as vendors feel out elasticity. Whoever turns long-context from a luxury feature into a default capability will bank loyalty and usage.

Risks and open questions

Claims of “half the cost” invite three categories of risk. First, quality trade-offs: sparse patterns can degrade gracefully on benchmarks but falter in unstructured, multi-hop real-world queries. Second, systems friction: without mature compiler and runtime support, sparsity savings can be lost to kernel mismatches or cache churn. Third, competitive response: incumbents can ship their own sparse or hybrid attention paths quickly, compressing any pricing moat.

The product tether could be an advantage here. A live app exposes failure modes quickly, enabling targeted adjustments to attention patterns and data curricula. But it also raises the bar: users don’t grade on a curve. If long-context answers drift, word-of-mouth will undercut the cost narrative before the architecture can be refined.

Forecast: near‑term trajectory and likely outcomes

In the coming months, expect DeepSeek to iterate on its sparse-attention recipe using telemetry from the consumer app: which context lengths dominate, where answers wobble, and how often users lean on retrieval to compensate for sparsity. That feedback should inform model routing—when to switch between dense and sparse paths—and yield a second-wave release tuned for the most common long-context patterns.
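Routing between dense and sparse paths could start as a simple policy keyed on context length and request type. The sketch below is hypothetical: the thresholds and the `high_stakes` flag are illustrative assumptions that echo the scenario of reserving dense attention for high-stakes reasoning, not a description of DeepSeek’s system.

```python
from dataclasses import dataclass


@dataclass
class Request:
    context_tokens: int
    high_stakes: bool = False  # hypothetical flag, e.g. set by the caller or a classifier


def choose_path(req: Request, sparse_threshold: int = 32_768,
                dense_limit: int = 131_072) -> str:
    """Pick an attention path for a request under a hypothetical routing policy."""
    if req.context_tokens <= sparse_threshold:
        return "dense"   # short contexts: dense attention is cheap enough
    if req.high_stakes and req.context_tokens <= dense_limit:
        return "dense"   # pay for full attention where errors are costly
    return "sparse"      # long, routine contexts go to the sparse path
```

In a telemetry-driven loop, the thresholds would be tuned from observed quality and cost per context bucket rather than fixed up front.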

As developer adoption passes an early threshold, two platform responses are likely. First, rivals will expose hybrid attention modes within their flagship models, positioning them as “smart context” options with steadier p99 latency and lower fees on lengthy inputs. Second, clouds will pilot dedicated long-context endpoints that co-locate retrieval, cache management, and sparse-friendly kernels, making context-heavy apps less brittle.

By late next year, if DeepSeek sustains a measurable cost advantage without a noticeable quality gap on real workloads, pricing pressure will filter into enterprise contracts. Long-context allowances will expand in baseline plans, and teams will design workflows that assume affordable document-scale prompts. If the quality gap proves material, the market will revert to tiered offerings: sparse-optimized models for synthesis and summarization, dense attention reserved for high-stakes reasoning.

Baseline expectation: the cost claim will partially hold under production traffic, enough to set a new reference price for long-context APIs. The biggest wins will come where retrieval and tool use constrain token growth, letting sparse attention carry most of the load. Expect a trickle of comparative trials from early adopters through the next product cycle—and for providers to standardize “smart context” SKUs that make longer windows the default rather than the exception.